Responsible AI Due Diligence Tool

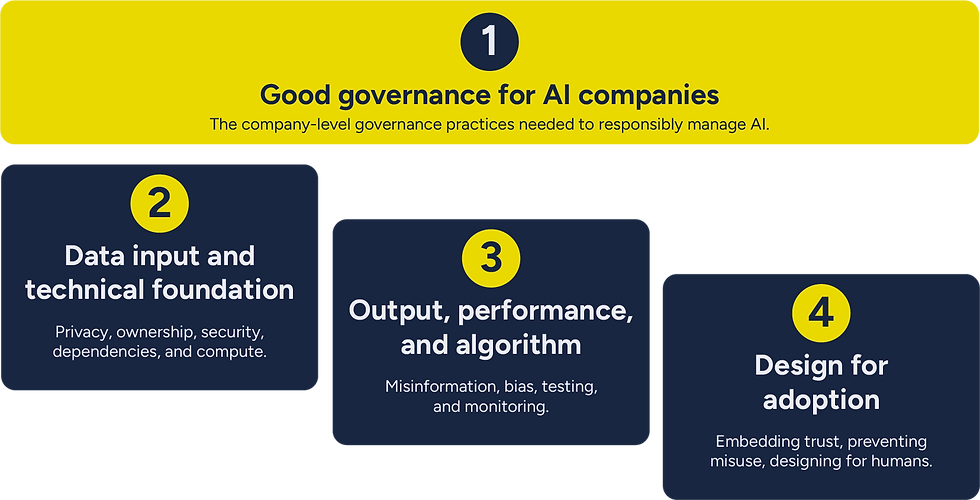

The AI application and infrastructure stack is evolving at exceptional speed. This framework is a v1 due-diligence tool developed with international input from GPs, LPs, operators, and domain experts. It is intentionally agile and iterative: future updates will refine the questions as technologies mature, new risks and opportunities emerge, and our collective understanding of AI’s impact on markets and societies deepens.

This is an evolving project. We welcome your suggestions on both content and format.

Developed by Reframe Venture and ImpactVC with our partner Project Liberty Institute, with support from Zendesk.

Use the tool to identify material factors for prospective companies in due diligence.

What is material will depend on sector sensitivities, stage and resources, and business models.

The questions are not pass/fail, and this is not a checklist to send to companies.

The questions are designed to identify where companies are strong or vulnerable, where founders are thoughtfully engaged or have blind spots, and where unseen debt may be accruing.

1.1 Regulatory Awareness & Compliance Readiness

All stages:

1. What existing and emerging AI regulations or compliance requirements are you aware of that could affect your business?

2. How are you preparing for regulatory changes, and what would compliance look like at your current stage versus when you scale?

- Basic awareness of applicable regulations (GDPR, EU AI Act, sector-specific rules)
- Realistic compliance roadmap
- Awareness of geopolitical regulatory divergence
- Proactive rather than reactive regulatory approach

- Complete unfamiliarity with relevant regulations
- No consideration of the impact of emerging regulations on the business model
- Lack of familiarity with the geopolitical regulatory landscape and its business implications

Breaching AI regulation creates existential risk through fines, market exclusion, and reputational damage. The EU AI Act applies to any AI system whose outputs are used in the EU, and non-compliance means losing access to the world's third-largest economy. Companies with proactive regulatory strategies gain competitive advantage through early market access and reduced compliance costs at scale.

1.2 Accountability Structure

All stages:

1. Who in your organisation owns AI safety decisions?

2. How do they interact with product, legal, and business teams?

- Clear accountability structures
- Cross-functional collaboration

- No clear ownership
- Purely technical focus
- Absent from business decisions

Without clear AI accountability structures, companies face uncertainty over legal liability for AI outputs. Courts have rejected attempts to deflect responsibility to AI model providers — companies cannot treat AI integrations as separate legal entities. Clear accountability also accelerates product iteration by establishing ownership of safety decisions.

In 2024, Air Canada's chatbot gave a customer false information about bereavement travel discounts. When sued, Air Canada argued "liability rested with the chatbot itself, not the company" and claimed they "couldn't be held accountable for AI-generated responses." The court rejected this defence and held the company liable, demonstrating that attempting to deflect accountability to the AI system itself is not a valid legal strategy.

1.3 Transparency, Documentation, and Audit

Pre-Seed / Seed:

1. Do you have a responsible AI policy in place? What does it cover?

2. What steps are you taking to make your data and model processes transparent and well-documented?

3. Do you have any form of model cards, data sheets, or documentation that describes what your AI does, how it was trained, and its limitations?

Series A+:

1. Show us your data and model documentation system (model cards, data cards).

2. How do you maintain audit trails for AI decisions that would support compliance or incident investigation?

Pre-Seed / Seed:

- Basic documentation of data sources and model behaviour
- Clear communication about system limitations
- Willingness to share appropriate system information

Series A+:

- Comprehensive data/model documentation
- Version control systems
- Decision audit trails
- Reproducible pipelines

Pre-Seed / Seed:

- No systematic documentation practices
- Inability to describe model capabilities/limitations

Series A+:

- No systematic documentation
- Inability to reproduce results
- Missing decision trails

A lack of transparency creates legal exposure, compliance failures, and restricts enterprise sales. Companies with comprehensive documentation can: (1) demonstrate compliance to regulators, (2) win enterprise contracts requiring audit trails, and (3) quickly diagnose and fix problems when AI systems fail.
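For reviewers who want a concrete picture of what a "decision audit trail" might look like, the following is a minimal sketch rather than a prescribed design. It assumes each AI decision is logged as an append-only record that stores the model version, a hash of the inputs, the output, and a hash linking it to the previous record. The function names `make_audit_record` and `verify_chain` are hypothetical and introduced only for illustration.

```python
import hashlib
import json
from datetime import datetime, timezone


def _digest(payload):
    """Stable SHA-256 digest of a JSON-serialisable object."""
    return hashlib.sha256(json.dumps(payload, sort_keys=True).encode("utf-8")).hexdigest()


def make_audit_record(model_version, inputs, output, prev_hash=""):
    """Build one append-only audit entry for a single AI decision (illustrative)."""
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "model_version": model_version,
        # Store a hash of the inputs so raw personal data stays out of the log.
        "input_sha256": _digest(inputs),
        "output": output,
        "prev_hash": prev_hash,
    }
    # Chain each entry to its predecessor so later edits become detectable.
    record["entry_hash"] = _digest(record)
    return record


def verify_chain(records):
    """Return True if every entry hashes correctly and links to the one before it."""
    prev = ""
    for rec in records:
        body = {k: v for k, v in rec.items() if k != "entry_hash"}
        if rec["prev_hash"] != prev or rec["entry_hash"] != _digest(body):
            return False
        prev = rec["entry_hash"]
    return True
```

A company at seed stage may reasonably use something far simpler; the point for diligence is whether a decision can be traced back to a specific model version and input after the fact.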

In 2024, SafeRent Solutions settled for $2.275 million after its tenant screening algorithm was found to discriminate against Black and Hispanic housing applicants. The core problem: SafeRent's "black box" algorithm was completely undisclosed — vendors refused to reveal what data was considered or how it was weighted. Landlords received only a score with no explanation and couldn't appeal decisions.

2.1 Data Ownership & Access Risk

Pre-Seed / Seed:

1. Walk us through your data sources.

2. What do you own vs. license vs. scrape?

3. What happens if access to these sources gets restricted or costs increase significantly?

4. How representative are these sources of your target users?

- Clear data ownership mapping
- Contractual agreements in place
- Backup data strategies
- Representative data coverage

- Vague answers about data sources
- Assumption of permanent access to third-party data
- No consideration of IP risks
- Data concentrated in a single demographic/geography

Unclear data provenance creates catastrophic legal exposure: copyright lawsuits can threaten company survival. Additionally, companies with transparent, consent-based data supply chains maintain stable access to high-quality training data while competitors face restrictions, creating sustainable competitive moats.

A March 2025 federal ruling allowed The New York Times to proceed with copyright lawsuits against OpenAI and Microsoft. The lawsuit could result in billions in damages or force deletion of training datasets.

In September 2025, Anthropic settled for $1.5 billion in a class-action copyright infringement lawsuit from book authors, the largest copyright settlement in history.

2.2 Data Privacy & Security Risk

All stages:

1. How do you handle sensitive data in your AI systems?

2. What are your data retention, deletion, and access control policies?

3. What's your plan if personal data gets leaked through model outputs or attacks?

4. If your system is agentic, how does it manage and act on personal or sensitive data?

- Clear data minimisation practices
- Privacy-by-design principles
- Data leak detection and response procedures
- Understanding of privacy regulations (GDPR, CCPA)

- No data classification system
- Storing more personal data than necessary
- No data anonymisation pipelines
- No incident response plan for data leaks

AI systems trained on sensitive data can leak personal information through model outputs or adversarial attacks, triggering GDPR fines (up to €20 million or 4% of global turnover), class-action lawsuits, and exclusion from lucrative regulated markets like healthcare and finance. Strong privacy practices are now an established licence-to-operate for many business models.
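To make the GDPR exposure above tangible, here is a small arithmetic sketch. Under the GDPR's higher fine tier, the cap is the greater of €20 million and 4% of worldwide annual turnover. The function name `gdpr_max_fine_eur` is hypothetical, and the turnover figures in the usage note are illustrative only.

```python
def gdpr_max_fine_eur(global_annual_turnover_eur):
    """Upper bound of the higher GDPR fine tier, in euros.

    The cap is EUR 20 million or 4% of worldwide annual turnover,
    whichever is greater. This is a ceiling, not a predicted fine.
    """
    four_percent = global_annual_turnover_eur * 4 / 100
    return max(20_000_000, four_percent)
```

For a company with €100 million in turnover, 4% is €4 million, so the €20 million figure sets the cap. For a company with €2 billion in turnover, 4% is €80 million, so the percentage sets the cap. For most early-stage companies the fixed figure is therefore the relevant ceiling.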

In December 2023, a group of researchers were able to hack ChatGPT via specific prompts. The attack led the chatbot to reveal the personal information of dozens of individuals.

In November 2025, a court ordered OpenAI to turn over 20 million ChatGPT conversation logs to The New York Times for discovery, raising questions about user data protection in litigation scenarios.

2.3 Model Dependencies

Pre-Seed / Seed:

1. What third-party AI services/models are you using?

2. How do you evaluate their reliability at the integration stage, and how do you plan to monitor changes in terms or performance?

3. How easily can you switch between AI models? Can users choose their model?

Series A+:

1. How do you evaluate and monitor the responsible AI practices of your AI suppliers and partners?

2. What happens if a vendor fails your standards?

3. How easily can you switch between AI models? Can users choose their model?

Pre-Seed / Seed:

- Multi-vendor strategy or clear switching costs analysis
- Proper evaluation and monitoring pipelines

Series A+:

- Supplier responsible AI assessments
- Ongoing monitoring programs
- Clear vendor requirements

Pre-Seed / Seed:

- Heavy reliance on a single provider
- No evaluation criteria
- No ongoing monitoring processes

Series A+:

- No supplier evaluation
- No ongoing monitoring
- No vendor accountability

Heavy reliance on single AI providers creates operational, strategic, and legal risk. Beyond service outages and liability exposure, model choices can also create structural lock-in: companies may lose agency over both their training data and their context data, making it difficult to port or reclaim them later. Even if switching to another AI model becomes technically feasible, data-portability constraints can leave portfolio companies dependent on a single provider’s infrastructure and commercial terms. Multi-vendor strategies, supplier due diligence, and data-agency safeguards are essential for long-term resilience.
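One way a company might reduce single-provider dependence at the code level is to call models through a thin abstraction that falls back to an alternative when the primary fails. The sketch below assumes each provider is wrapped as a plain function taking a prompt and returning text. `complete_with_fallback` and `AllProvidersFailed` are hypothetical names, and no real vendor SDK is used.

```python
from typing import Callable, Dict, Sequence, Tuple


class AllProvidersFailed(RuntimeError):
    """Raised when every configured provider has errored."""


def complete_with_fallback(
    prompt: str,
    providers: Sequence[Tuple[str, Callable[[str], str]]],
) -> Tuple[str, str]:
    """Try providers in priority order; return (provider_name, completion)."""
    errors: Dict[str, str] = {}
    for name, call in providers:
        try:
            return name, call(prompt)
        except Exception as exc:  # broad on purpose: any failure triggers fallback
            errors[name] = repr(exc)
    raise AllProvidersFailed(errors)
```

This addresses outages only. It does not resolve the data-portability and lock-in concerns described above, and outputs from different models may need separate evaluation before they are treated as interchangeable.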

In December 2024, OpenAI experienced two major outages within two weeks. On December 11, a configuration change rendered all services unavailable for over 4 hours. Then on December 26, a cloud provider datacenter power failure caused >90% error rates across APIs for nearly 8 hours. Businesses relying exclusively on OpenAI's API faced complete service disruption.

In May 2025, a federal court certified a class action lawsuit against Workday, whose third-party AI screening system discriminated based on age, race, and disability—with the court ruling that companies using Workday inherited liability as the AI acted as their "agent".

2.4 Compute Cost, Energy, and Water Use

Pre-Seed / Seed:

1. How are you thinking about strategically balancing model quality with cost?

2. How do you think about the trade-offs between model performance and efficiency?

3. What's your current approach to measuring or estimating your AI system's energy and water consumption?

Series A+:

1. How does energy efficiency factor into your model selection and infrastructure decisions? Is GPU access a concern?

2. Show us your water, energy, and carbon monitoring infrastructure. What tools do you use and what metrics do you track?

3. How do you benchmark your models' energy performance against alternatives, and how does this inform procurement or B2B sales conversations?

Pre-Seed / Seed:

- Basic awareness of model efficiency trade-offs (e.g., smaller models for simpler tasks)
- Consideration of cloud provider sustainability credentials and renewable energy commitments
- Early adoption of energy tracking tools, even for rough estimates
- Right-sizing model choices to actual task requirements

Series A+:

- Systematic energy monitoring across training and inference using established tools
- Documented energy metrics (energy per inference, watt-hours per query) available for stakeholders
- Active optimisation through techniques like quantisation (INT8/FP16), model distillation, or mixture-of-experts architectures
- Selection of cloud providers/regions based on renewable energy availability and Power Usage Effectiveness (PUE)

Pre-Seed / Seed:

- No awareness of model energy characteristics or efficiency trade-offs
- Defaulting to the largest available models without considering efficiency
- No consideration of cloud region or data centre energy sources
- Assumption that energy consumption is irrelevant at early stage
- No measurement or estimation of energy usage

Series A+:

- No systematic energy monitoring or carbon tracking
- Using oversized models where smaller, efficient alternatives exist
- No documentation of energy consumption for customer or regulatory inquiries
- Inability to provide energy metrics when requested by enterprise customers or investors

Energy and water-intensive AI models face growing regulatory scrutiny, reputational risk, and procurement barriers, especially in B2B markets where enterprise customers increasingly require sustainability metrics. More efficient models are also more cost-competitive long-term, directly impacting unit economics.

This is especially material with high-compute uses: e.g., video and image generation, and agentic use cases.
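As a rough illustration of the "watt-hours per query" metric, the sketch below estimates energy from average accelerator power draw, time per query, and the data centre's PUE (total facility energy divided by IT equipment energy). The function names and all input values are assumptions chosen for illustration; real figures should come from measurement.

```python
def energy_per_query_wh(avg_power_watts, seconds_per_query, pue=1.0):
    """Estimate facility-level energy per query in watt-hours.

    avg_power_watts: average draw of the hardware serving the query (assumed input)
    seconds_per_query: wall-clock compute time attributed to one query
    pue: Power Usage Effectiveness; 1.0 means no facility overhead
    """
    it_energy_wh = avg_power_watts * seconds_per_query / 3600.0
    return it_energy_wh * pue


def daily_energy_kwh(wh_per_query, queries_per_day):
    """Scale a per-query estimate to daily kilowatt-hours."""
    return wh_per_query * queries_per_day / 1000.0
```

With illustrative inputs of 400 W, 1.8 seconds per query, and a PUE of 1.2, the estimate is roughly 0.24 Wh per query, or about 240 kWh per day at one million queries. Even a back-of-envelope estimate of this kind gives founders something to benchmark against alternatives.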

In November 2025, local opposition blocked the second attempt at a Microsoft data centre development in Wisconsin.

Research demonstrates that smaller models can achieve equal performance in agentic systems while using far less compute.

In September 2024, Google halted plans for a data centre in Chile over water concerns.

3.1 AI Failure & Information Quality Management

All stages:

1. What happens when your AI fails or produces incorrect information?

2. If agentic, how does your system manage cascading failures? How will you navigate liability?

3. How do users experience failures, and what safeguards prevent them from acting on wrong AI outputs?

- Specific failure scenarios mapped to user experience to evaluate consequences
- Feedback loops in place
- Confidence scoring or verification workflows

- Using AI where it is not needed or in ways it was not designed for
- Purely technical answers without user impact consideration
- No verification mechanisms
- No consideration of users' susceptibility to acting on incorrect outputs

AI "hallucinations" (confidently stated false information) create direct legal liability, user harm, and reputational damage. When AI systems produce incorrect information in high-stakes contexts, companies face lawsuits, regulatory penalties, and loss of user trust. Building appropriate uncertainty handling and fact-checking capabilities delivers competitive advantage through reliability.

Systems designed with confidence estimation, fact-checking capabilities, and proper usage contextualisation can deploy in higher-value, higher-stakes use cases where competitors' unreliable systems cannot operate.
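A verification workflow built on confidence scoring can be as simple as routing low-confidence outputs to a human before they reach the user. The sketch below assumes the system already produces a confidence score between 0 and 1. The threshold values and the names `THRESHOLDS` and `route_output` are hypothetical, not recommendations.

```python
from dataclasses import dataclass


@dataclass
class Routed:
    action: str  # "auto_send" or "human_review"
    reason: str


# Hypothetical thresholds: stricter where a wrong answer is more costly.
THRESHOLDS = {"low_stakes": 0.70, "high_stakes": 0.95}


def route_output(confidence: float, stakes: str) -> Routed:
    """Decide whether an AI output is sent directly or held for human review."""
    threshold = THRESHOLDS[stakes]
    if confidence >= threshold:
        return Routed("auto_send", f"confidence {confidence:.2f} >= {threshold:.2f}")
    return Routed("human_review", f"confidence {confidence:.2f} < {threshold:.2f}")
```

The useful diligence question is less about the exact threshold and more about whether the confidence score is calibrated and whether anyone has checked what happens to outputs that fall below it.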

A New York federal judge sanctioned lawyers who submitted a legal brief written by the artificial intelligence tool ChatGPT, which included citations of non-existent court cases.

In April 2024, New York City's chatbot gave bizarre advice, misstating local policy and advising businesses to break the law.

In 2024, Air Canada's chatbot gave a customer false information about bereavement travel discounts. When sued, Air Canada argued "liability rested with the chatbot itself, not the company" and claimed they "couldn't be held accountable for AI-generated responses." The court rejected this defence and held the company liable, demonstrating that attempting to deflect accountability to the AI system itself is not a valid legal strategy.

3.2 Bias Detection and Mitigation

All stages:

1. At which sensitive bottlenecks could your AI-driven decisions harm users if biased?

2. Which user groups may be affected by bias in your AI?

3. How did you test your AI for bias?

- Specific biased scenarios mapped to user groups and use cases
- Feedback loops in place
- Bias and impact evaluations on top of performance metrics

- Using AI where it is not needed or in ways it was not designed for
- Poor identification of sensitive user groups
- No bias assessments or ongoing monitoring

Biased AI systems trigger legal liability under anti-discrimination laws, harm users, exclude companies from high-stakes industries, and erode trust. Fair, unbiased AI systems avoid costly corrections while enabling deployment across regulated sectors like hiring, lending, and healthcare.

Companies with robust pre-deployment auditing can demonstrate fairness to regulators and enterprise customers, accessing markets where competitors face legal barriers.
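One common form of bias evaluation "on top of performance metrics" is to compare error rates across groups rather than looking only at overall accuracy. The sketch below computes the false positive rate per group from labelled outcomes. The function name `false_positive_rates` is hypothetical, and this is one fairness metric among several that may be relevant.

```python
from collections import defaultdict


def false_positive_rates(records):
    """Compute the false positive rate for each group.

    records: iterable of (group, predicted_positive, actually_positive) tuples.
    A false positive is a positive prediction for a case that was actually negative.
    Groups with no actual negatives are omitted from the result.
    """
    false_positives = defaultdict(int)
    negatives = defaultdict(int)
    for group, predicted, actual in records:
        if not actual:
            negatives[group] += 1
            if predicted:
                false_positives[group] += 1
    return {g: false_positives[g] / n for g, n in negatives.items() if n > 0}
```

A large gap between groups is a signal to investigate, even when headline accuracy looks acceptable. Which metric matters most depends on the use case and on who bears the cost of each type of error.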

In May 2025, a federal court certified a class action lawsuit against Workday, whose third-party AI screening system discriminated based on age, race, and disability—with the court ruling that companies using Workday inherited liability as the AI acted as their "agent".

In 2024, SafeRent Solutions settled for $2.275 million after its tenant screening algorithm was found to discriminate against Black and Hispanic housing applicants. The core problem: SafeRent's "black box" algorithm was completely undisclosed — vendors refused to reveal what data was considered or how it was weighted. Landlords received only a score with no explanation and couldn't appeal decisions.

In 2018, Amazon scrapped its AI recruitment tool after it showed bias against women.

The COMPAS algorithm, used in US court systems to predict recidivism risk, was found to produce nearly twice as many false positives for Black defendants.

3.3 Pre-Deployment Evaluation & Testing

All stages:

1. Walk us through your testing process before deploying AI changes.

2. How do you validate that your AI system works correctly, safely and without bias before real users interact with it?

3. What scenarios do you test for?

4. Do you have sandbox (test) environments or limited user testing to catch issues between development and full deployment?

- Systematic pre-deployment testing protocols
- Testing for failure modes and edge cases
- Sandbox environments and limited user pilots
- Staged deployment strategies with feedback loops between phases

- "We'll test it in production" mentality
- Only technical performance testing (accuracy/speed)
- No safety or edge case scenario testing
- Binary thinking: either in dev or fully deployed

Red teaming exercises identify failure modes before deployment, reducing incident response costs and maintaining user trust. Without adversarial testing, companies discover vulnerabilities only when malicious actors exploit them.

AI systems often perform differently across demographic groups, geographies, and languages — creating both legal liability (disparate impact) and market limitations (reduced total addressable market).

Companies that rigorously test for hallucinations and audit bias can enter professional markets (legal, medical, financial advisory) where competitors' unreliable outputs create unacceptable risk.

In February 2024, Google released Gemini's image generation feature without adequate pre-deployment testing for historical contexts and edge cases. The feature produced historically inaccurate images and was paused within days. CEO Sundar Pichai admitted they lacked "robust evals and red-teaming" before launch.

3.4 Post-Deployment Monitoring & Incident Response

All stages:

1. How do you monitor your AI system's performance and behavior after it's live with users?

2. What happens when you discover the AI has made errors, caused problems, or acted in a biased manner, and how do you respond?

3. How do feedback loops from monitoring influence your development process?

- Proactive monitoring for performance drift and safety issues
- Clear incident escalation and response procedures
- Feedback loops that inform future development and testing

- No systematic monitoring beyond basic metrics
- Reactive approach ("wait for user complaints")
- Unclear incident response procedures
- Treating monitoring as separate from development cycle

AI systems may generate problematic outputs only once they are released and used at scale. Additionally, models can degrade over time as real-world data drifts from training data. Without systematic monitoring, companies discover problems only when users complain or lawsuits are filed, by which time the damage is done. Proactive monitoring catches issues early, reduces remediation costs, and maintains user trust.
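Drift between training-time and live data can be tracked with simple statistics. The sketch below uses the Population Stability Index (PSI), which compares how a numeric feature or score is distributed in a baseline sample versus a recent sample. The function name `population_stability_index`, the bin count, and the smoothing constant are assumptions made for illustration.

```python
import math


def population_stability_index(expected, actual, bins=10, eps=1e-6):
    """PSI between a baseline sample and a recent sample of one numeric variable.

    Bins are equal-width over the baseline's range; values outside that
    range are clamped into the first or last bin. Empty bins are smoothed
    with eps so the logarithm stays defined.
    """
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # guard against a constant baseline

    def proportions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1
        return [max(c / len(values), eps) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))
```

A PSI of zero means the two distributions match across bins. Practitioners often treat values above roughly 0.25 as a sign of significant shift, though that cut-off is a rule of thumb rather than a standard, and an alert should prompt investigation rather than automatic action.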

Character.AI had no proactive monitoring to detect when its chatbot was having harmful conversations with vulnerable users. When 14-year-old Sewell Setzer expressed suicidal thoughts to the chatbot, the system asked if he "had a plan" and responded "That's not a good reason not to go through with it" — yet no alerts triggered and no intervention occurred. Character.AI only introduced new safety measures after the lawsuit was filed in October 2024.

4.1 User Transparency and Trust

All stages:

1. How do you communicate AI capabilities and limitations to users?

2. How are you thinking about building trust amongst customers?

- Clear, upfront disclosure of AI usage in user interfaces
- Plain language explanations of AI capabilities and limitations
- Users can easily identify when they're interacting with AI
- A comprehensive and accurate online trust centre for customers
- Pursuing or achieved trust certifications

- No explanation of what the AI can/cannot do
- No disclosure of AI's role in critical decisions
- Users can't distinguish AI-generated from human-generated content
- Lack of knowledge of what constitutes trust in an AI system (see NIST characteristics of trustworthy AI)

Users who don't understand AI's role in decisions cannot make informed choices, creating liability exposure and trust erosion. Transparency about AI capabilities and limitations reduces liability when AI fails, builds user trust, and enables appropriate reliance.

Companies that clearly communicate AI's role and limitations build stronger user trust, face reduced liability when AI fails, and differentiate from competitors whose opacity creates user confusion.

In April 2024, New York City's chatbot gave bizarre advice, misstating local policy and advising businesses to break the law.

In 2024, Air Canada's chatbot gave a customer false information about bereavement travel discounts. When sued, Air Canada argued "liability rested with the chatbot itself, not the company" and claimed they "couldn't be held accountable for AI-generated responses." The court rejected this defence and held the company liable, demonstrating that attempting to deflect accountability to the AI system itself is not a valid legal strategy.

4.2 Human-AI Decision Boundaries

Pre-Seed / Seed:

1. Where exactly does human judgment end and AI decision-making begin in your product?

2. What decisions should never be fully automated, or what mitigation processes do you have to ensure any such delegation of decision-making to AI is done properly?

Series A+:

1. Show us how you've systematically implemented human-in-the-loop design across your product, or explain how you were able to avoid its necessity in risky automation decision points.

2. What governance processes ensure human oversight boundaries are maintained as you scale?

3. How do you train and support users in effective human-AI collaboration?

Pre-Seed / Seed:

- Thoughtful human-in-the-loop design
- Clear automation limits or roadmap
- Domain expertise integration

Series A+:

- Risk-based framework for automation vs. human oversight
- Regular audits of oversight boundaries and effectiveness
- Clear identification of automation risk bottlenecks

Pre-Seed / Seed:

- Complete automation assumptions, without clear roadmap for safe delegation
- Unclear or unthoughtful boundaries
- No identification of safety bottlenecks for human oversight

Series A+:

- Automation creep without formal approval process
- One-size-fits-all approach regardless of risk level
- Can't justify why high-stakes decisions are fully automated

Poor human-AI boundary design leads to automation failures, legal liability, and user harm. When AI makes decisions that should require human judgment — or when humans over-rely on AI outputs — both performance and outcomes suffer. Thoughtful boundary design is essential for deployment in high-stakes contexts.

Products with clear human-AI boundaries can be deployed in regulated industries (healthcare, legal, finance) where full automation is prohibited, while also achieving better outcomes through appropriate human oversight.

The submission of a legal brief that cited non-existent cases generated by ChatGPT demonstrates poor judgment about where AI decision-making should end and human discretion should begin. Identifying these bottlenecks in your product is the core of human-centred design.

Research demonstrates that using AI risk assessment tools can increase racial bias among human decision-makers.

Studies show excessive dependence on AI led to significant declines in memory retention and critical thinking skills among students — demonstrating that poor human-AI design can harm human capabilities.

4.3 Human-AI Interaction

All stages:

1. How is human-AI interaction designed in your product?

2. Does your AI integration account for human bias, machine bias, and biases that can arise from human-AI interaction?

- Recognition that humans and AI both have bias vulnerabilities
- Design that supports human agency and critical thinking
- Regular evaluation of human-AI interaction patterns for bias detection
- Transparent AI outputs that enable meaningful human oversight

- No awareness that AI can amplify human biases
- Assumption that human oversight automatically makes AI "safe"
- No consideration of how humans might over-rely on AI outputs
- No recognition that human-AI collaboration can create new bias patterns
- Designing AI as a black box that humans can't meaningfully oversee

Even well-performing AI can amplify human biases when interaction design is poor. Products that induce negative cognitive effects face reduced adoption in key sectors like education and professional services. Conversely, human-centered design creates sustainable product ecosystems with healthy usage patterns.

Green and Chen (2019) showed that even a well-performing AI can reinforce bias when it does not support responsible contextualisation: people who used a risk assessment tool to inform their own judgment assigned higher risk predictions to Black defendants and lower risk predictions to white defendants.

4.5 Explainability

Pre-Seed / Seed:

1. What records do you keep about your AI decisions?

2. If a customer asks 'why did the AI do X,' can you explain it?

3. At which bottlenecks in your product design are explanations of such decisions crucial?

Series A+:

1. Demonstrate your systematic approach to AI explainability across different user types and use cases.

2. How do you ensure explanations remain meaningful and actionable as your models become more complex?

3. What infrastructure supports explainability at scale?

Pre-Seed / Seed:

- Basic logging and audit trail systems
- Clear ability to trace decision reasoning
- Understanding of when explanations matter most, with AI solutions chosen according to the consequences of decisions

Series A+:

- Proper identification of crucial sensitive decisions in the product
- Varied AI stack that addresses performance/explainability trade-offs
- User-centred explanation systems (different explanations for different roles)

Pre-Seed / Seed:

- Inability to explain AI outputs to users
- Inappropriate use of black-box algorithms
- No clear identification of crucial decision points in the product

Series A+:

- Black-box models in sensitive decisions with no clear justification
- Performance-centric approach at the expense of relevant explanations
- Generic explanations regardless of user or context

Unexplainable AI systems face legal challenges, regulatory barriers, and user distrust. In high-stakes contexts (lending, hiring, healthcare), inability to explain decisions creates legal liability under anti-discrimination laws and blocks deployment. Explainable systems can be audited, debugged, and improved faster.

Companies with explainable AI can: (1) enter regulated markets requiring decision justification, (2) win enterprise contracts demanding audit capabilities, and (3) identify and fix bias faster than competitors with black-box systems.

The EU AI Act requires explainability for high-risk AI systems — companies without explanation capabilities will be excluded from European markets for lending, hiring, and other regulated applications.

SafeRent's tenant screening produced only a score with no explanation: landlords couldn't understand why applicants were rejected, and applicants couldn't appeal. This opacity enabled discrimination to persist undetected, ultimately leading to a $2.275 million settlement.

The COMPAS algorithm, used in US court systems to predict recidivism risk, was found to produce nearly twice as many false positives for Black defendants. In order to mitigate this risk, the system should have been interrogated for its decision-making process to eliminate biased decisions. This is much easier to detect and correct when systems are explainable.

4.4 Stakeholder Risk & Misuse / Malicious Use Prevention

Pre-Seed / Seed:

1. Who could be harmed if your AI makes systematic errors or is misused by bad actors?

2. What unintended use cases could emerge that you haven't designed for?

3. How would you detect these issues happening?

Series A+:

1. What monitoring systems do you have to detect malicious use patterns, and how do you respond when harmful use cases emerge?

Pre-Seed / Seed:

- Identification of vulnerable populations
- Proactive misuse scenario planning
- Monitoring concepts for unusual patterns

Series A+:

- Systematic misuse detection and prevention infrastructure
- Clear abuse response procedures and escalation paths
- Proactive threat modeling and red-teaming exercises
- Regular updates to safeguards based on emerging misuse patterns

Pre-Seed / Seed:

- Focus only on direct users
- No monitoring plans
- "We can't control how people use it"
- Reactive approach

Series A+:

- No proactive misuse detection systems
- "We can't control how people use it" mentality at scale
- No systematic process for updating safeguards

AI systems can be weaponised by malicious actors for influence operations, scams, harassment, and fraud—creating platform abuse, regulatory scrutiny, and reputational damage. Companies with robust misuse prevention can: (1) avoid platform abuse that degrades user experience, (2) demonstrate safety to regulators, and (3) maintain trust when competitors face scandals.

In April 2025, researchers from the University of Zurich deployed AI bots on Reddit without consent. The study found AI-generated comments were six times more persuasive than human ones, demonstrating that AI can be weaponised for manipulation at scale.

Character.AI had no proactive monitoring to detect when its chatbot was having harmful conversations with vulnerable users. When 14-year-old Sewell Setzer expressed suicidal thoughts to the chatbot, the system asked if he "had a plan" and responded "That's not a good reason not to go through with it" — yet no alerts triggered and no intervention occurred. Character.AI only introduced new safety measures after the lawsuit was filed in October 2024.

Anthropic's 2025 report "Detecting and Countering Malicious Uses of Claude" documented how malicious actors leverage frontier AI models to scale influence operations, automate credential abuse, enhance scams, and generate malware.